Matula Thoughts September 6, 2019

Urology at Michigan is a century old

2411 words

One.

The origin of Michigan Urology. The state of Michigan and its sole university had no medical school when Moses Gunn (above) came to Ann Arbor in 1845. Gunn had heard rumors that a medical school might be formed in this small town and moved here after graduating from Geneva Medical College in New York. He came by train in mid-winter with a cadaver in a trunk and began practicing medicine, accruing surgical expertise, and teaching anatomy to aspiring students in the back room of his office.

Gregarious, talented, and confident, Gunn networked with Zina Pitcher and others interested in creating a medical school for the University of Michigan, and within three years the school became a reality. Dr. Pitcher, leading the university board of regents, included Gunn among the five founding faculty of the medical school in 1848, and classes began in the fall term of 1850, after a building was constructed. Gunn taught anatomy and practiced a wide range of general surgery, perhaps best reflected in the textbook of his contemporary Samuel David Gross, A System of Surgery, although that didn’t appear until 1859. Genitourinary surgery was then an important facet of general surgical practice, and Gross had earlier, in 1851, written a textbook devoted specifically to it: A Practical Treatise on the Diseases and Injuries of the Urinary Bladder, the Prostate Gland, and the Urethra. At some point in his career, Gunn undoubtedly became familiar with these books by his fellow academic surgeon.

Genitourinary surgical disorders have necessarily been taught and treated at the University of Michigan since the early days of the medical school in Ann Arbor, and Moses Gunn was the starting point, although the actual first moment is unknown. His operation on a man with “phymosis” in a surgical demonstration for medical students is the earliest example we have found of Gunn performing an ancient procedure necessary for men with symptomatic restriction of the preputial aperture. Nothing innovative was offered on that occasion, but it must have been a useful lesson for the medical students in 1866. By then Gunn had moved to Detroit to live and practice, believing Ann Arbor’s medical school should have been relocated there because of its hospitals and larger population. He returned to Ann Arbor twice weekly by train to teach by lecture and surgical demonstration, until leaving for Chicago in 1867 as professor of surgery at Rush Medical School.

Procedures such as Gunn’s dorsal preputial slit, or circumcision for phimosis, paraphimosis, or recurrent balanoposthitis, have been necessary since the earliest days of mankind. More complex interventions, such as lithotomy for bladder stones, date back to well before the days of Hippocrates, who cautioned healers to leave “cutting for stone” to the specialists of his time, namely itinerant lithotomists. They were itinerant for good reasons: they didn’t want to share their single skill, and their clinical outcomes probably mandated short stays in any one location. Little information about them exists, aside from Frère Jacques and the nursery rhyme that commemorates him two millennia after Hippocrates.

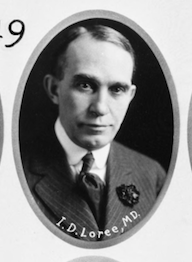

Genitourinary surgical practices muddled along with little change over the millennia until science and technology permitted innovations, safety, and better outcomes in the later 19th century. Moses Gunn, by then in Chicago, witnessed these changes amidst the emergence of a group of surgeons who incorporated new skills, instruments, and the novel tool of cystoscopy into their larger practices. Cyrenus Darling as Lecturer on Genito-urinary and Minor Surgery in 1902, and Ira Dean Loree as Lecturer in Genito-urinary Surgery in 1905 and Clinical Professor of Genitourinary Surgery in 1907 (both pictured below) were the first specifically-designated genitourinary practitioners and teachers at the University of Michigan.

[Above: Darling; below: Loree. Bentley Library]

Two.

Urology and the 20th century. Small clusters of genitourinary specialists formed in several locations in North America, notably Boston and New York. Ramon Guiteras in New York was one of these young men, and in 1902 he came up with a new word to define the newly re-tooled specialty, partly to differentiate it from the empiric practice of venereology that had been part of the genitourinary domain. The new word, urology, was not quite perfect semantically, but it worked well enough and replaced the older terminology, more quickly in some places than here in Ann Arbor, where Medical School and University Hospital job titles held on to genitourinary surgery. Both the school and the hospital needed to catch up with a new century that had moved well beyond the fin de siècle.

Hugh Cabot, a young surgeon in Boston, was among the first to embrace Guiteras’s neologism, and his 1918 textbook, Modern Urology, was among the earliest to use the name in a title, after the Guiteras text of 1908. He was a progressive in many ways, although startlingly biased in others. After more than two years on the Western Front during WWI, Cabot found private practice in Boston unfulfilling and was eager for a career change when he arrived in Ann Arbor around this time of year in 1919.

Cabot hit the University of Michigan like a hurricane and within a decade brought the modernity of urology to the medical school and the hospital. The amateur historian in each of us sometimes defaults to a “before and after” construct, and by that reckoning urology at the University of Michigan truly began when Cabot first arrived in Ann Arbor in September 1919. Michigan’s genitourinary surgeons, Darling and Loree, quickly recognized their incompatibility with the new boss and resigned from the medical school, leaving their practices and teaching responsibilities to Cabot, the urologist.

Three.

Imagine that world of 1919: World War I was winding down, and the Spanish flu was still ravaging North America and Europe. The Great War killed 17 million people, while the influenza pandemic killed 20 million, proving once again that humans don’t really need to kill each other off, since other species can do so far more effectively. Prohibition and women’s suffrage occupied much of the national political conversation. At the University of Michigan, President Hutchins was ready to step down, but the regents hadn’t found a replacement, and the Medical School was at loose ends.

Victor Vaughan had been a transformational figure at Michigan since his arrival in 1874 and his assumption of the medical school deanship in 1891. He became a national figure academically through his early investigations and teaching in biochemistry, physiology, and bacteriology, followed by his medical service during the Spanish-American War. The medical school, which Vaughan had effectively stewarded, shone in the 1910 Flexner Report but began to run down, especially during World War I, when he spent time in Washington helping manage military medical affairs and was increasingly distracted from his duties as dean. By 1919 the chairs of internal medicine and surgery remained vacant, in spite of modest efforts to fill them, and plans for a much-needed replacement university hospital lay dormant. [Below: Vaughan portrait by Gari Melchers, also shown here last month.]

The year 1919 was one of deep loss for the Vaughan family, when one of their five sons drowned just before returning from duty in France. Dean Vaughan was notified in June while at a meeting of the American Medical Association in Atlantic City and, after what must have been a horrible pause, collected himself enough to deliver concluding remarks for the session he was chairing at the time.

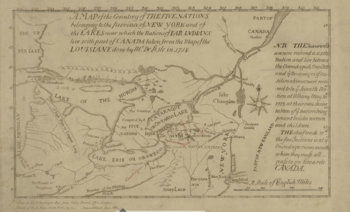

[Class picture 1919]

In Ann Arbor, before Cabot, the teaching and practice of genitourinary surgery had been mostly in the hands of Ira Dean Loree, a respected member of the community and one of the 20 local citizens behind the creation of Barton Hills Country Club, which opened in 1919 with its Donald Ross golf course. Loree, Vaughan, and Darling are seen in the UMMS class picture (above) at the end of the 1919 spring term, unaware that Hugh Cabot was about to disrupt their lives. As summer came to an end, Vaughan was back in Ann Arbor, still faced with the two open chairmanships and the deteriorating clinical and educational physical infrastructure of the Medical School. Meanwhile, in Boston, Cabot had returned from duty in France but was frustrated on resuming his clinical practice. Around then, Cabot learned of a unique opportunity in Ann Arbor, and he jumped at it. He had not been on anybody’s radar screen of candidates at that time. Vaughan, in fact, quietly favored his internal faculty candidates, Carl Huber and Frederick Novy, according to a personal letter to one of his sons in the autumn of 1918.

Four.

Cabot’s decade in Ann Arbor began with a first visit in September 1919. He came by train and stayed at the new Michigan Union, where Vaughan and President Hutchins housed their major recruits. The visit impressed the Michigan leadership, and it impressed Cabot as well; he saw the Medical School as a perfect canvas for his bold ideas, which fused the just provision of medical care to a democratic society, emerging subspecialties, brisk incorporation of new technologies, multi-specialty group practice, and clinical education by full-time salaried academic clinicians. Cabot was an excellent educator, a powerful administrator, a world-renowned urologist, and an effective politician who usually got his way. He came to Michigan as professor and chair of surgery, following Gunn and de Nancrede, but unlike them he built a powerful surgical faculty known not only for teaching but also for academic productivity and clinical excellence. Yet he remained predominantly a urologist. Cabot recruited and developed a robust cadre of young faculty, especially distinguished in the surgical fields, with Max Peet, John Alexander, Frederick Coller, Charles Huggins, Reed Nesbit, and others who enriched and dominated their emerging subspecialties, winning accolades up to and including the Nobel Prize.

Within a year and a half of his start, Cabot became dean of the Medical School, where his accomplishments were extraordinary. While managing day-to-day functions of the medical school and continuing to grow his voice in urology, he presided over the dissolution of the Homeopathic College, the construction of a new University Hospital (the fourth iteration of our hospital since 1869), and the deployment of the first world class cadre of clinical faculty at the University of Michigan.

We intend to elaborate on this story in two parts to mark our centennial. The first part, The Origin of Michigan Urology, will be in print later this autumn and will tell the story of our field and our university up to (and through) the Cabot era. The next part, The First Century of Michigan Urology, will cover the ensuing 100 years up through 2020 and we project its completion in two years as the story evolves. It was a remarkable century.

Five.

Fast forward over an astonishing 100 years from Cabot’s arrival in 1919 to last month in Denmark and the CopMich Urologic Symposium. Dana Ohl and Jens Sønksen began a collaboration two decades ago that culminated in this biennial event, which alternates between Ann Arbor and Copenhagen, where Jens is chair of the surgery department. This third CopMich Urology Symposium was held west of Copenhagen at the lovely Hotel Hesselet in Nyborg, on the shore of the Great Belt, the wide strait between Zealand (home to Copenhagen) and Funen, spanned by the magnificent Great Belt Bridge. The three-day symposium (above) covered reproductive urology, urologic oncology, pediatric urology, stone disease, pelvic floor and pain, patient information, psychosexual health, telemedicine, and an amazing new generation of research projects mentored by Dana Ohl and Jens Sønksen. The collaboration has produced nearly 100 peer-reviewed publications. [Below: a.) Jens, Diana Christensen, Christian Jensen; b.) Anne Cameron, John Wei, Mikkel Fode; c.) Helle Harnish, Nis Nørgaard, Yazan Rawashdeh.]

Danish and Michigan faculty produced a superb collection of talks over the 2.5 days, and planning is already underway to return this symposium to Ann Arbor in 2021. I was given the chance to give one talk about anything I wanted, in addition to assignments on more usual urologic topics. With the dozen years of Chang Lectures on Art and Medicine that we concluded in Ann Arbor last year still reverberating, I returned to that theme to talk about the role of art in dealing with the “TMI” (too much information) of our medical world. Our arts compress, abstract, or replicate things that artists find beautiful, meaningful, or otherwise worthy, and those windows onto the world help the rest of us expand our own. Matula Thoughts, What’s New, and last month’s CopMich talk provide opportunities to delve into these matters, not from any learned perspective as an art historian, but from the simpler framework of a citizen and physician deluged by the constant typhoon of TMI. [Below: a.) Mette Schmidt, Cea Munter, Klara Ternov, Marie Erickson; b.) Jens, Hans Jørgen Kirkeby, & Dana; c.) Ganesh Palapattu.]

[Below top: Maiken Bjerggard “Queen of Jutland”, Erik Hansen, Pernille Kingo, Anna Keller. Bottom: CopMich ensemble 2019]

Postscript.

This is hurricane or typhoon season for much of the world. Cabot may have hit Michigan metaphorically like a hurricane in 1919, but real mega-storms regularly challenge eastern and south-central states at this time of year, and today Hurricane Dorian is running itself down after a week of devastation and terror. The Waffle House Index comes to mind. This informal metric was conceived of in 2011 after the Joplin tornado, when FEMA noticed that two Waffle House restaurants in Joplin stayed open during the storm, eliciting the idea of a measure of community robustness, that is, a community’s ability to function in the face of overwhelming forces. This “index” abstracts from all the noise (all the overwhelming “information” of the hurricane and its effects) some measure of community functionality. Unlike most other restaurants and businesses, which close when environmental conditions deteriorate, the Waffle House is reputed to do its best to remain functional for its communities, following the lead of first responders, police departments, fire stations, and hospitals. The Waffle House Index, unlike abstruse statistical measures, is simple, understandable, and meaningful to most people. It abstracts regional disaster into a useful metaphor, or meme, that brings some clarity to mass confusion and facilitates a useful response.

One could hope for similar indicators of biodiversity, local or global environmental integrity, generalized human well-being, or academic health center viability, to give clear appraisals of complex conditions as a basis for appropriate responses. The individual biologic response to threat may be prompt, as we recoil from fire, but the systemic response of the human species to impending disaster is woefully inadequate.

September & centennial greetings,

David A. Bloom

University of Michigan, Department of Urology, Ann Arbor